By Sofía Pérez

Intro

Real-Time Bidding (RTB) is a common scenario in digital advertising. While a user surfs through the web, different opportunities to present an ad arise. These opportunities are auctioned off among advertisers. Bidding an optimal price is key to improving campaign performances and increasing profits.

This post presents a walkthrough of the RTB process and its challenges. We will focus especially on the advertiser’s side and present typical strategies for optimizing its bidding price.

The rest of this post is organized as follows. In the first section, we’ll review some key concepts of the RTB ecosystem. Next, we’ll dive deep into some state-of-the-art solutions for Inventory Pricing. Finally, we’ll discuss the removal of third-party cookies and its effect on the RTB world, and present a possible strategy to overcome it.

How does RTB work?

An RTB auction usually goes like this:

- A user navigates to a page. After a certain time, the browser sends a request to load an ad.

- The advertising space is auctioned. The publisher sets a floor price for the space.

- Advertisers evaluate the impression according to their targeting requirements and place their bids.

- If the highest bid is above the floor (reserve) price, the advertiser who placed it wins the space. The price paid for the space depends on the type of auction.

- The winning ad is displayed to the user.

To ensure a real-time experience, SSPs place a very strict time constraint on the RTB process. Any bids received after the deadline are not considered in the auction. The bid response time includes both the computation time of the bidding optimization algorithms and the network latency. Latency limits for the whole process normally range between 80 and 120 ms [1][2], depending on the application and the auction type, but they can be even lower 😱

Types of auctions

First Price Auction

In this scenario, the highest bidder will win the auction and will be charged exactly that amount. As DSPs are forced to guess how much their competition will bid, the auction can lead to extremely high bidding prices. To avoid overpaying, DSPs need to be aware of the fair market value of the impressions they are bidding on.

Second Price Auction

In this scenario, the participant who makes the highest bid wins the auction and is charged the second-highest bidding price. When a DSP wins the auction, it knows both the winning price and the price paid. When a DSP loses, however, it does not receive any response from the ad server, so it has no idea how high the winning price was. It only knows that it was higher than its own bidding price. This scenario is known as “Censorship”.
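
The difference between the two auction types can be sketched in a few lines. This is a minimal illustration, not an exchange implementation: the function name `run_auction` and the DSP names are hypothetical, the strict "above the floor" rule follows the auction steps described earlier, and charging the floor price when only one bid clears it is an assumption.

```python
from typing import Dict, Optional, Tuple


def run_auction(
    bids: Dict[str, float], floor: float, auction_type: str
) -> Optional[Tuple[str, float]]:
    """Return (winner, price_paid), or None if no bid is above the floor."""
    # Only bids strictly above the publisher's floor price take part.
    eligible = {name: bid for name, bid in bids.items() if bid > floor}
    if not eligible:
        return None

    # Highest bid first; ties are broken by insertion order (an assumption).
    ranked = sorted(eligible.items(), key=lambda item: item[1], reverse=True)
    winner, top_bid = ranked[0]

    if auction_type == "first":
        # First price: the winner pays exactly what it bid.
        return winner, top_bid
    if auction_type == "second":
        # Second price: the winner pays the runner-up's bid,
        # or the floor when no other bid cleared it (assumption).
        price = ranked[1][1] if len(ranked) > 1 else floor
        return winner, price
    raise ValueError("auction_type must be 'first' or 'second'")
```

With bids of 2.5, 1.8 and 0.4 and a floor of 0.5, the same DSP wins in both cases, but it pays 2.5 under first price and 1.8 under second price.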

Bid shading

In order to minimize the risk of overpaying for impressions in first price auctions, the concept of bid shading was introduced. It consists of using predictive algorithms to obtain an optimal bidding price. This mechanism aims to close the gap between what buyers are willing to pay for an impression and what they actually have to pay. When determining the final price, bid shading algorithms take into account a wide range of factors, such as pricing data, win rates, site, ad size, and competitive dynamics.
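
One common way to frame bid shading is as maximizing expected surplus: the value of the impression minus the bid, weighted by the probability of winning at that bid. The sketch below assumes a hypothetical logistic win-rate curve (`win_probability`, with made-up `midpoint` and `steepness` parameters); a production system would estimate that curve from the factors listed above.

```python
import math


def win_probability(bid: float, midpoint: float = 2.0, steepness: float = 2.0) -> float:
    """Hypothetical win-rate curve: a logistic function of the bid."""
    return 1.0 / (1.0 + math.exp(-steepness * (bid - midpoint)))


def shade_bid(value: float, step: float = 0.01) -> float:
    """Grid-search the bid in [0, value] that maximizes expected surplus.

    Expected surplus = (value - bid) * P(win | bid) in a first price auction.
    """
    best_bid, best_surplus = 0.0, 0.0
    n_steps = int(round(value / step))
    for i in range(n_steps + 1):
        bid = i * step
        surplus = (value - bid) * win_probability(bid)
        if surplus > best_surplus:
            best_bid, best_surplus = bid, surplus
    return best_bid
```

Bidding the full value always yields zero surplus, so the shaded bid ends up strictly below what the buyer is willing to pay.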

The RTB ecosystem

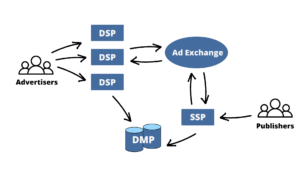

Now, let’s go through the key players in the RTB process.

- Supply-side platforms (SSP) are intended to help publishers (sellers) manage and commercialize their ad impressions. They allow publishers to connect their inventory to multiple ad exchanges and networks at the same time. SSPs receive the ad request from the web browser or application. They then analyze its user information (cookies, location, etc) and pass it to the ad exchange.

- Ad exchanges (ADX) are online marketplaces where publishers and advertisers can interact. They aim to simplify the interaction between the two parties. ADXs receive the information from the SSPs and pass it to the demand-side platforms.

- Demand-side platforms (DSP) provide technology to advertisers (buyers) by automating and centralizing the bidding process. They make the decision-making process significantly faster and more efficient. DSPs may place multiple bids on behalf of different advertisers based on their preferences. When a DSP receives the opportunity from the ADX, it analyzes it and produces a bid on the impression.

- Data Management Platforms (DMP) provide historical user data to DSPs, SSPs and ADXs.

Bidding price optimization strategies

For a DSP, successfully predicting the winning price, known as Inventory Pricing, is the key to winning the RTB auction. This problem is usually formulated as forecasting the probability distribution of the market price for each auction. In second price auctions, censorship must also be taken into account.

Traditionally, Survival Analysis – a branch of statistics for analyzing the expected duration of time until one event occurs – was the preferred approach to tackle the optimal bidding price prediction problem. These methods are referred to as Point Estimators as they return a single price value as a recommendation. Thus, they fail to provide a landscape of the price distribution and therefore force the DSPs to directly bid the estimated price, eliminating the possibility of a more strategic bid.

Probabilistic models were then adopted to incorporate more information (the price landscape) into pricing decisions, and to answer more specific questions such as “how much should I bid if I want to win a certain amount of impressions?” [4]. To this end, recent approaches assume a heuristic form for the winning price distribution and use models to fit its key parameters. However, these prior assumptions might severely affect the model’s effectiveness.

| “The appropriate price distribution form may vary for different datasets, and even for different ad dimensions. It is almost impossible to use a fixed distribution assumption to accurately describe the price landscape.” [4] |
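
To see why a price landscape is more useful than a single point estimate, consider the question quoted earlier: how much to bid in order to win a given share of impressions. With a discretized landscape, the answer is a quantile lookup. The sketch below is illustrative only; the function name and the bucket layout (upper bounds plus one probability per bucket) are assumptions.

```python
from itertools import accumulate
from typing import List


def bid_for_win_rate(
    bucket_upper_bounds: List[float],
    bucket_probs: List[float],
    target_win_rate: float,
) -> float:
    """Smallest bucket upper bound whose cumulative probability reaches the target.

    bucket_probs[i] is the predicted probability that the market price
    falls into bucket i; bidding the bucket's upper bound is assumed to
    beat every market price inside that bucket.
    """
    cumulative = accumulate(bucket_probs)
    for upper, cdf in zip(bucket_upper_bounds, cumulative):
        if cdf >= target_win_rate - 1e-12:  # small tolerance for float error
            return upper
    # Target not reachable within the modeled range: bid the top of the range.
    return bucket_upper_bounds[-1]
```

For a landscape with bucket upper bounds 1 to 5 and probabilities 0.1, 0.3, 0.4, 0.15 and 0.05, winning 70% of auctions requires a bid of 3, while winning 90% requires a bid of 4.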

Another key aspect of the RTB ecosystem is the low latency requirement to achieve online serving. Solutions not only need to accurately fit the price landscape but also do so with a millisecond-level response – meaning that the algorithms involved should be able to provide an optimal bid in 10ms or even less 🤯. To attain this ambitious response-time requirement, designs usually consist of an offline module for training, which concentrates model complexity; and a simple online module for real-time operations.

Now let’s review some state-of-the-art approaches for this problem, shall we?

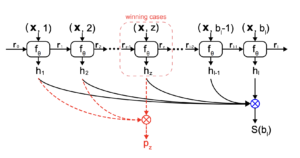

Deep Landscape Forecasting

This technique uses deep learning to model the bidding price probability distribution and survival analysis to handle censorship. It is based on the paper “Deep Landscape Forecasting for Real-time Bidding Advertising” [3], published in 2019. Here the authors propose an RNN framework to flexibly model the conditional winning probability for each bidding price without requiring any prior assumption.

In the presented framework the information gathered from the bidding logs is represented as a set of triples (x,b,z):

- x – features of the bid request (user information, ad size, ad position, etc)

- b – proposed bidding price

- z – observed market price (null if the DSP did not win the auction, since the price is then censored)

The modeling is transformed from continuous space to discrete space: the price range is uniformly divided into a set of L intervals Vl. The DLF model is then built on a recurrent neural network whose architecture is presented in the figure below.

At each interval Vl, the l-th RNN cell predicts the conditional winning probability hl given a certain bid price bl and the request features x. The RNN function f is a standard LSTM that takes (bl,x) as input and produces hl as output, while the vector rl-1 is the hidden vector passed on from the previous RNN cell. Splitting the price range into finer intervals linearly increases the number of LSTM steps in the network.
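
The per-interval conditional probabilities can be chained into a full price landscape using the standard survival-analysis identity. The sketch below assumes that each output is the probability that the market price falls in an interval given that it did not fall in any earlier one; this reading of the RNN outputs, and the function name, are assumptions rather than the authors' code.

```python
from typing import List, Tuple


def landscape_from_hazards(hazards: List[float]) -> Tuple[List[float], List[float]]:
    """Chain per-interval conditional probabilities into a price landscape.

    hazards[l] is assumed to be P(market price in interval l | not in an
    earlier interval). Returns (price_probs, win_probs), where
    price_probs[l] = P(market price in interval l) and
    win_probs[l]   = P(winning with a bid at the top of interval l).
    """
    price_probs: List[float] = []
    win_probs: List[float] = []
    survival = 1.0  # P(market price lies above every interval seen so far)
    for h in hazards:
        price_probs.append(survival * h)
        survival *= 1.0 - h
        win_probs.append(1.0 - survival)
    return price_probs, win_probs
```

No distribution shape is imposed here: whatever values the network emits per interval, the chain rule turns them into a valid probability mass over the intervals covered.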

The solution was tested on two public datasets, YOYI and iPinYou, and compared with nine baseline models. It achieved promising results: a 13% improvement on average in the ANLP (average negative log probability) and C-index (concordance index) metrics. However, its response time falls outside the standard 10 ms SLA: the authors report a 22 ms average response time, which might compromise real-time performance in some scenarios.
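
For reference, ANLP rewards models that put high probability on the market price that was actually observed. A minimal sketch over discretized landscapes follows; the function signature and the `eps` clipping constant are assumptions added for illustration.

```python
import math
from typing import List


def anlp(
    predicted_landscapes: List[List[float]],
    true_buckets: List[int],
    eps: float = 1e-12,
) -> float:
    """Average negative log probability of the observed market price.

    predicted_landscapes[i][k] is the predicted probability that the
    market price of auction i falls into bucket k; true_buckets[i] is
    the bucket where it actually fell. Lower is better.
    """
    total = 0.0
    for probs, bucket in zip(predicted_landscapes, true_buckets):
        # Clip to eps so a zero prediction does not produce log(0).
        total += -math.log(max(probs[bucket], eps))
    return total / len(true_buckets)
```

A model that assigns probability 0.9 to the true bucket scores lower (better) than one that assigns 0.5 to it.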

Arbitrary Distribution Modeling

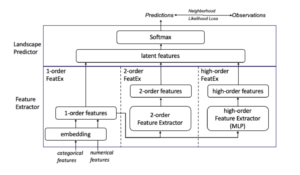

The Arbitrary Distribution Modeling (ADM) framework was presented in 2021 in the paper “Arbitrary Distribution Modeling with Censorship in Real-Time Bidding Advertising”. Along the same lines as DLF, this approach also seeks to model the bidding price distribution independently for each RTB scenario, but with the novelty of a simpler architecture designed for efficient online inference.

As in the DLF framework, the information gathered from the bidding logs is represented as a set of triples (x,b,z), where the bid request features are further classified into four categories:

- xp – publisher features, which include information about the website (URL, domain, ad size, etc)

- xu – user features, which include information related to the viewer (age, location, device, etc)

- xa – ad features, which represent the information the DSP wants to deliver (industry, genre, ad duration, etc)

- xc – context features, which cover neutral information (timestamp, weekday, etc)

The price range is also discretized into buckets, as in the DLF method. The width of each bucket depends on the precision required by each specific business. The ADM framework is based on a Multi-Layer Perceptron (MLP) and incorporates a novel loss function, the Neighborhood Likelihood Loss (NLL), which helps the model learn an accurate price landscape. Its simple structure keeps inference latency low. Based on the assumption that, to win a (second-price) auction, the DSP’s bidding prices are usually close to the actual winning prices, the NLL loss function gives the model a precise direction for learning from the observations. As shown in the diagram, the framework consists of two tiers: Feature Extractor and Landscape Predictor.

Feature Extractor (3-layered): First it maps the original features into the latent space, where categorical features are embedded (by one-hot-encoding) and concatenated with normalized numerical features. Then 2nd-order features are extracted from it by the 2nd-order Feature Extractor. For the high-order features, a set of fully connected layers is applied.

Landscape Predictor: concatenates the 1st, 2nd and high-order feature vectors and feeds them into a Softmax layer to predict the probability of the winning price falling into each price bucket.
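
To make the output layer concrete, the sketch below turns a vector of logits into bucket probabilities with a softmax and scores one training example while respecting censorship. Note that the loss shown is a plain censored negative log-likelihood used as a stand-in; it is not the paper's Neighborhood Likelihood Loss, whose exact form is not reproduced here. The function names, and the choice to count the bid's own bucket as a possible location of the market price on lost auctions, are assumptions.

```python
import math
from typing import List, Optional


def softmax(logits: List[float]) -> List[float]:
    """Numerically stable softmax over price buckets."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def censored_nll(
    logits: List[float],
    bid_bucket: int,
    price_bucket: Optional[int],
    eps: float = 1e-12,
) -> float:
    """Negative log-likelihood of one bid log entry under censorship.

    Won auction (price_bucket is known): score the observed bucket.
    Lost auction (price_bucket is None): the market price is only known
    to be at or above the bid, so score the total mass from bid_bucket up.
    """
    probs = softmax(logits)
    if price_bucket is not None:
        return -math.log(max(probs[price_bucket], eps))
    tail_mass = sum(probs[bid_bucket:])
    return -math.log(max(tail_mass, eps))
```

Because the predictor is a single feed-forward pass followed by a softmax, inference cost does not grow with the number of sequential steps, which is consistent with the latency argument made for this architecture.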

The authors tested their solution on the public datasets iPinYou and YOYI and compared it with six known baselines, including the DLF model. On the ANLP and C-index metrics, ADM outperforms DLF on both datasets. Furthermore, the reported execution times were on the order of 5 ms for 99% of the test cases, comfortably within the usual 10 ms SLA. 😎

Future challenges for the RTB industry

The rise of Internet anonymity is clearly becoming an arduous challenge for the digital advertising industry. While users are promised an improved, tracking-free experience on the web, advertisers are forced to find new ways to generate personalized content and to embrace RTB.

As you may have heard, Google has announced the removal of third-party cookies for 2023. Consequently, advertisers will not be able to use third-party cookies to track users on the Chrome browser, which currently represents over 63% of the market share globally.

Traffic-Fingerprints

On this subject, the paper “Improving Ads-Profitability Using Traffic-Fingerprints”, submitted in May 2022, introduced the concept of traffic-fingerprints as a way of representing the daily traffic of a website. The main goal was to improve campaign performance without relying on user information, motivated by a scenario in which third-party cookies are eliminated.

As defined by the authors, traffic-fingerprints are normalized 24-dimensional vectors that represent a website’s daily traffic distribution; in other words, the number of clicks collected per hour per site. These vectors enable the detection of non-profitable ad domains.
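
Building such a vector is straightforward. The sketch below assumes that "normalized" means dividing each hourly click count by the daily total, so that the entries sum to 1; the paper may use a different normalization, and the function name is hypothetical.

```python
from typing import List


def traffic_fingerprint(hourly_clicks: List[float]) -> List[float]:
    """Turn 24 hourly click counts into a normalized traffic-fingerprint.

    Assumption: normalization divides by the daily total, so the result
    describes the shape of the day regardless of the site's size.
    """
    if len(hourly_clicks) != 24:
        raise ValueError("expected one click count per hour (24 values)")
    total = sum(hourly_clicks)
    if total == 0:
        # A site with no clicks gets an all-zero fingerprint.
        return [0.0] * 24
    return [count / total for count in hourly_clicks]
```

Two sites with very different traffic volumes but the same daily rhythm end up with the same fingerprint, which is what makes the vectors comparable across domains.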

| “The main goal of the algorithm is to select web pages (domains) that are non profitable and ads should be blocked from appearing on those web pages.” [5] |

Once traffic-fingerprints are collected, the proposed algorithm goes as follows:

- Clustering: based on their traffic-fingerprints, domains are divided into groups using k-means clustering.

- Business rules: by analyzing each cluster’s statistics and evaluating its performance hourly, business rules are created to determine which groups performed worst and during which periods of time.

- Temporal blockage: clusters that show poor performance are then temporarily blocked from the RTB algorithms at specific time intervals.
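
The three steps above can be sketched end to end in plain Python. This is a toy illustration under stated assumptions: the k-means is a bare Lloyd's loop seeded with the first k points, and the business rule (block a cluster during any hour in which its summed profit is negative) is hypothetical, since the paper's actual rules are not detailed here.

```python
from typing import Dict, List, Set

Vector = List[float]


def squared_distance(a: Vector, b: Vector) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(points: List[Vector], k: int, iterations: int = 20) -> List[int]:
    """Plain Lloyd's k-means; the first k points seed the centroids."""
    centroids = [list(p) for p in points[:k]]
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: each fingerprint goes to its nearest centroid.
        labels = [
            min(range(k), key=lambda c: squared_distance(p, centroids[c]))
            for p in points
        ]
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:  # keep the old centroid if a cluster goes empty
                centroids[c] = [sum(dim) / len(members) for dim in zip(*members)]
    return labels


def blocked_hours(labels: List[int], hourly_profit: List[Vector]) -> Dict[int, Set[int]]:
    """Hypothetical rule: block a cluster in hours where its total profit is negative.

    hourly_profit[i][h] is the profit of domain i during hour h.
    """
    blocked: Dict[int, Set[int]] = {}
    for cluster in set(labels):
        rows = [row for row, label in zip(hourly_profit, labels) if label == cluster]
        totals = [sum(hour) for hour in zip(*rows)]
        blocked[cluster] = {hour for hour, total in enumerate(totals) if total < 0}
    return blocked
```

At serving time, a bidder would look up the cluster of the requesting domain and skip the auction if the current hour is in that cluster's blocked set. A real deployment would likely use a library k-means with better seeding.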

By adopting these strategies, the authors report a 40% increase in profit 🤑

Final thoughts

Wrapping up, we have explored the RTB framework and the key concepts behind it. The post aims to highlight what we considered as the most promising solutions in terms of bidding price optimization.

Certainly, one of its biggest challenges lies in the extreme time sensitivity of the DSP’s responses during the auction. In this regard, the Arbitrary Distribution Modeling (ADM) framework seems to be an insightful state-of-the-art approach. Adding cookie-free strategies to the framework also seems to be a wise move, especially after Google’s announcement.

Real-time bidding (RTB) is a challenging matter, and definitely an excellent opportunity to apply deep-learning techniques. As the industry becomes more mature, more challenges and fruitful research opportunities arise.

References

[1] Google: Best Practices for RTB Applications

[2] How Network Latency affects the RTB process for Adtech

[3] Deep Landscape Forecasting for Real-time Bidding Advertising

[4] Arbitrary Distribution Modeling with Censorship in Real-Time Bidding Advertising

[5] Improving Ads-Profitability Using Traffic-Fingerprints